The Problem We Solve

Rejection rates for myoelectric prostheses remain at 25–40%. The real bottleneck is not mechatronics — it's human-machine interfacing.

The loss of a hand is a debilitating event with long-lasting physical, psychological, and social consequences. Modern bionic limbs are sophisticated systems, but control methods that let users fully exploit their capabilities are still lacking.

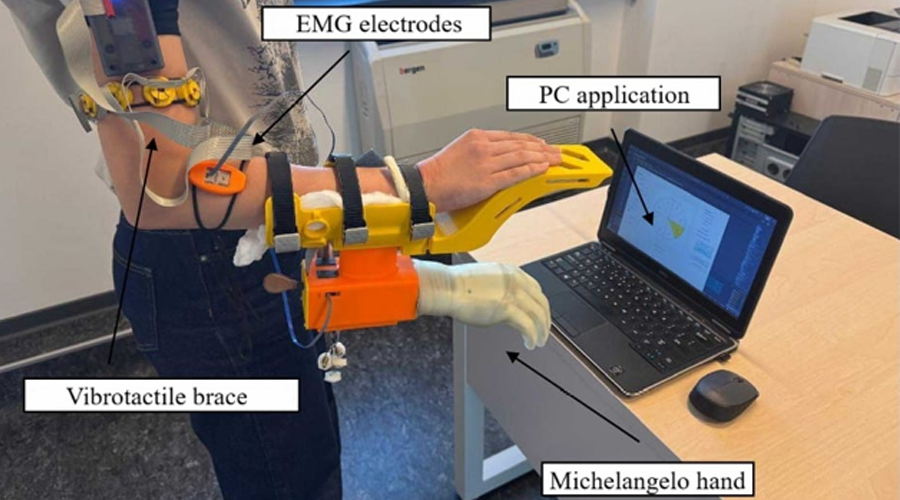

AILIMB advocates a semi-autonomous approach: the prosthesis is equipped with additional sensors and AI so that it can perform some functions automatically, effectively helping the user accomplish tasks with minimal cognitive load. The system combines myoelectric control for voluntary commands, artificial sensory feedback to restore tactile sensations, and automatic control powered by computer vision — all integrated inside a standard prosthesis socket.

AILIMB directly addresses the human-machine interfacing bottleneck by enabling intuitive, low-effort control through intelligent sensor fusion and AI.

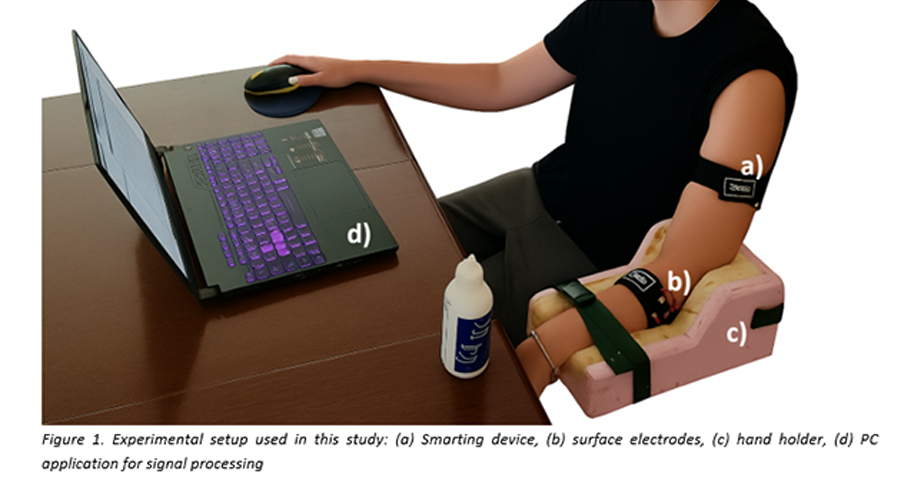

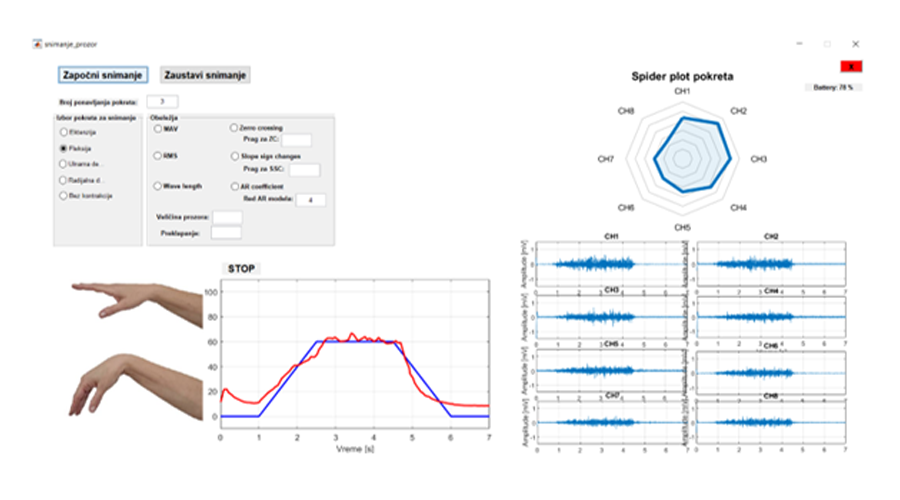

Machine learning classification and regression to recognize user motion intention from EMG data. We designed and tested methods for collecting and analyzing EMG signals, evaluated several pattern recognition algorithms, and obtained initial results showing high accuracy in distinguishing different hand movements and contraction levels. → Success rate >95% for 5 movement classes.
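As a rough illustration of EMG pattern recognition, the sketch below extracts two common time-domain features (mean absolute value and waveform length) from a signal window and classifies it with a nearest-centroid rule. It is not the AILIMB pipeline: the feature set, the classifier, the class names, and the synthetic Gaussian "EMG" are all assumptions chosen to keep the example self-contained.

```python
import math
import random

def td_features(window):
    """Return (mean absolute value, waveform length) of one EMG window."""
    mav = sum(abs(x) for x in window) / len(window)
    wl = sum(abs(b - a) for a, b in zip(window, window[1:]))
    return (mav, wl)

def fit_centroids(windows, labels):
    """Average the feature vectors of each class (nearest-centroid training)."""
    sums = {}
    for w, y in zip(windows, labels):
        f = td_features(w)
        s, n = sums.get(y, ((0.0, 0.0), 0))
        sums[y] = ((s[0] + f[0], s[1] + f[1]), n + 1)
    return {y: (s[0] / n, s[1] / n) for y, (s, n) in sums.items()}

def predict(centroids, window):
    """Pick the class whose centroid is closest in feature space."""
    f = td_features(window)
    return min(centroids, key=lambda y: math.dist(f, centroids[y]))

def synthetic_window(amplitude, rng, n=200):
    """Hypothetical stand-in for recorded EMG: zero-mean Gaussian noise."""
    return [rng.gauss(0.0, amplitude) for _ in range(n)]

# Assumed contraction levels; real classes would be hand movements.
levels = {"rest": 0.05, "light_grasp": 0.5, "strong_grasp": 2.0}
rng = random.Random(0)
train_w, train_y = [], []
for label, amp in levels.items():
    for _ in range(20):
        train_w.append(synthetic_window(amp, rng))
        train_y.append(label)
centroids = fit_centroids(train_w, train_y)
```

Calling `predict(centroids, window)` on a new window returns one of the assumed class names. A real system would use multi-channel recordings, feature scaling, and a stronger classifier evaluated on held-out data.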

Vibrotactile interface to convey the full state of the Michelangelo prosthesis. We developed vibrotactile feedback methods that encode grasping force, hand aperture, and wrist rotation, and tested them with able-bodied participants. The results suggest that these methods provide intuitive tactile feedback. → User recognition success rate >90%.

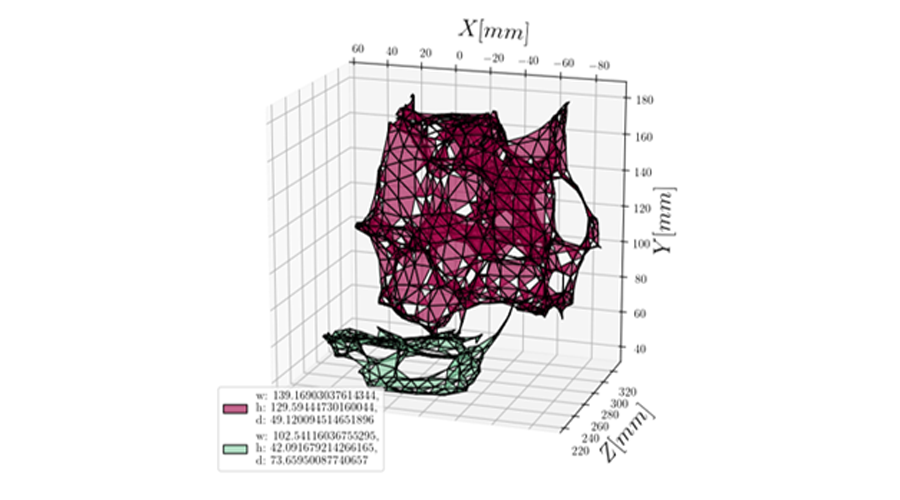

Depth camera + IMU for automatic hand preshaping. Using Time-of-Flight (ToF) depth sensors, we collected point clouds to study the recognition of small to medium-sized objects. Our experiments confirm that compact ToF sensors can effectively estimate object properties, even from relatively few data points. → Object size estimation with <10% relative error.
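To make the size-estimation idea concrete, here is a minimal sketch that estimates an object's visible width and height from a sparse point cloud using an axis-aligned bounding box. The simulated sensor (one return per pixel centre on a flat, camera-facing surface), the function names, and the object dimensions are hypothetical; the actual AILIMB processing is not described here and would also need segmentation and noise handling.

```python
def bounding_box_size(points):
    """Axis-aligned extent (dx, dy, dz) of a list of (x, y, z) points, in metres."""
    xs, ys, zs = zip(*points)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))

def relative_error(estimate, truth):
    """Absolute error divided by the true value."""
    return abs(estimate - truth) / truth

def simulate_face(width, height, distance, nx, ny):
    """Hypothetical ToF return: one point per pixel centre on a flat face."""
    return [
        ((i + 0.5) / nx * width, (j + 0.5) / ny * height, distance)
        for i in range(nx)
        for j in range(ny)
    ]

# Assumed object: 8 cm x 5 cm face, 30 cm from the sensor, sampled by 12 x 12 pixels.
true_width, true_height = 0.08, 0.05
cloud = simulate_face(true_width, true_height, distance=0.30, nx=12, ny=12)
est_width, est_height, _ = bounding_box_size(cloud)
width_error = relative_error(est_width, true_width)
height_error = relative_error(est_height, true_height)
```

Because the outermost samples sit half a pixel inside the true edges, the bounding box slightly underestimates the size. With only 144 points the error in this idealised, noise-free setup is about 8%.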

Fusion of volitional EMG commands and automatic control for a semi-autonomous prosthesis. Semi-autonomous fusion combines user-driven EMG control with intelligent automation, ensuring that voluntary commands always take priority. The system enables natural, real-time interaction without explicit mode switching, while automatic control assists by adapting grasp parameters to the target object.
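The priority rule described above can be sketched as a small arbitration function: whenever the user's EMG activity exceeds a threshold, the voluntary command wins; otherwise the automatic controller's suggestion is applied, and if neither is available the hand holds its state. The threshold value, the `GraspCommand` fields, and the function name are assumptions for illustration, not the project's implementation.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed normalised EMG level above which the user is considered to be commanding.
EMG_ACTIVE_THRESHOLD = 0.1

@dataclass
class GraspCommand:
    aperture: float   # 0.0 = closed, 1.0 = fully open
    wrist_deg: float  # wrist rotation in degrees
    source: str       # "user", "auto", or "hold"

def fuse(emg_level: float,
         user_cmd: GraspCommand,
         auto_cmd: Optional[GraspCommand],
         previous: GraspCommand) -> GraspCommand:
    """Arbitrate between voluntary and automatic control; the user always wins."""
    if emg_level >= EMG_ACTIVE_THRESHOLD:
        return GraspCommand(user_cmd.aperture, user_cmd.wrist_deg, "user")
    if auto_cmd is not None:
        return GraspCommand(auto_cmd.aperture, auto_cmd.wrist_deg, "auto")
    # No voluntary activity and no automatic suggestion: keep the current posture.
    return GraspCommand(previous.aperture, previous.wrist_deg, "hold")

# Example: vision suggests a preshape, then the user overrides it.
previous = GraspCommand(aperture=1.0, wrist_deg=0.0, source="hold")
auto = GraspCommand(aperture=0.6, wrist_deg=45.0, source="auto")
user = GraspCommand(aperture=0.2, wrist_deg=10.0, source="user")
preshape = fuse(0.0, user, auto, previous)   # user relaxed: automatic preshape applies
override = fuse(0.5, user, auto, previous)   # user active: voluntary command applies
```

Since the arbitration runs on every control cycle, the user takes over simply by contracting, with no explicit mode switch.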

All components are integrated into a wearable socket prototype, with comprehensive assessment planned in both lab and home environments.